Artificial Intelligence is moving fast. Very fast. New models appear every month. New tools pop up every week. It can feel overwhelming. But here’s the good news: there are powerful platforms that help you optimize, test, and discover Large Language Models (LLMs) without losing your mind.

If you’re building with AI, you need the right tools. They help you compare models. Improve prompts. Reduce costs. Boost performance. And even find hidden gems in the AI world. Let’s explore the top platforms that make LLM optimization and AI discovery simple and even fun.

TLDR: The best LLM optimization platforms help you test, compare, prompt-engineer, and monitor AI models easily. Tools like OpenAI Playground, Hugging Face, LangChain, Weights & Biases, and OpenRouter make it simple to improve performance and lower costs. AI discovery platforms let you explore thousands of models in one place. If you build or experiment with AI, these tools save time, money, and effort.

What Is LLM Optimization?

Let’s keep it simple.

LLM optimization means making your AI model perform better.

- Better answers

- Lower cost

- Faster responses

- More accuracy

- Less hallucination

Sometimes it’s about choosing a better model. Sometimes it’s about writing smarter prompts. And sometimes it’s about tracking performance over time.

Now let’s meet the platforms that help you do all that.

1. OpenAI Playground

Simple. Clean. Powerful.

OpenAI Playground is one of the easiest ways to experiment with large language models. You can test prompts. Change the temperature. Cap response length with max tokens. Compare results instantly.

Why it’s great:

- Easy testing of different prompts

- Adjust settings like temperature and max tokens

- Test multiple models

- Instant feedback

If you’re new to optimization, start here. It helps you understand how small changes affect outputs. You can literally see the difference in seconds.
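Under the hood, temperature rescales the model's raw scores for each candidate next token (its logits) before they become probabilities. Here is a minimal sketch in plain Python. It uses made-up logits for three candidate tokens and does not call any real model or API:

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw token scores into probabilities; temperature must be > 0."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    # Subtract the max before exponentiating for numerical stability.
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens.
logits = [2.0, 1.0, 0.5]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

At 0.2, nearly all the probability lands on the top-scoring token, so outputs become more predictable. At 2.0 the probability spreads across all three tokens, so outputs vary more. This sketch only accepts positive temperatures.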

Best for: Beginners and fast experimentation.

2. Hugging Face

This is the playground of AI researchers.

Hugging Face hosts thousands of models. Open-source. Community-built. Bleeding-edge. You can search by task. Filter by size. Compare performance.

It’s not just a model hub. It’s an AI discovery engine.

What makes it powerful:

- Massive open model library

- Model cards, many with benchmark results

- Spaces for live demos

- Community likes and discussion threads

If you want to test a smaller, cheaper alternative to a big model, this is your place.

Sometimes a lightweight open model performs just as well for your task. Why pay more?
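You can check whether a smaller model really is "just as good" on your own examples before switching. The sketch below assumes each candidate is wrapped as a plain Python function. The two stub models and three test cases are hypothetical placeholders for real calls, for example to a model downloaded from Hugging Face:

```python
from typing import Callable

Model = Callable[[str], str]

def exact_match_accuracy(model: Model, cases: list[tuple[str, str]]) -> float:
    """Fraction of cases where the model's answer matches the expected one (case-insensitive)."""
    if not cases:
        raise ValueError("need at least one test case")
    hits = sum(
        1 for prompt, expected in cases
        if model(prompt).strip().lower() == expected.strip().lower()
    )
    return hits / len(cases)

# Hypothetical stand-ins for a large model and a small open model.
def large_model(prompt: str) -> str:
    return {"2+2?": "4", "Capital of France?": "Paris", "Opposite of hot?": "cold"}.get(prompt, "")

def small_model(prompt: str) -> str:
    return {"2+2?": "4", "Capital of France?": "paris", "Opposite of hot?": "warm"}.get(prompt, "")

cases = [("2+2?", "4"), ("Capital of France?", "Paris"), ("Opposite of hot?", "cold")]

for name, model in [("large", large_model), ("small", small_model)]:
    print(name, exact_match_accuracy(model, cases))
```

Here the small model gets 2 of 3 right. Whether that is good enough depends on your quality bar. Exact match is a blunt metric, and open-ended tasks need a task-specific score. The point is to decide with your own data.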

Best for: Finding alternative models and experimenting with open-source AI.

3. OpenRouter

Imagine accessing models from many LLM providers through a single API.

That’s OpenRouter.

You can route your requests to different models. Compare outputs. Even switch providers automatically if one fails.
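OpenRouter handles fallbacks on its side, but the idea fits in a few lines. Here is a minimal client-side sketch: try each provider in order of preference and return the first successful answer. The provider functions are hypothetical stubs, not real API calls:

```python
from typing import Callable

class ProviderError(Exception):
    """Raised by a provider stub when a call fails."""

def route_with_fallback(prompt: str,
                        providers: list[tuple[str, Callable[[str], str]]]) -> tuple[str, str]:
    """Try providers in order; return (provider_name, answer) from the first that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and move on to the next provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))

# Hypothetical stubs: the primary provider is down, the backup answers.
def primary(prompt: str) -> str:
    raise ProviderError("503 service unavailable")

def backup(prompt: str) -> str:
    return f"backup answer to: {prompt}"

name, answer = route_with_fallback("Summarize this ticket", [("primary", primary), ("backup", backup)])
print(name, "->", answer)
```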

Key benefits:

- Access models from different companies

- Compare pricing easily

- Switch models without rewriting code

- Test quality vs. cost

This is huge for optimization. Sometimes Model A is better at reasoning. Model B is cheaper. Model C is faster.

With OpenRouter, you can test all three.
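Testing quality against cost is mostly per-token arithmetic. Providers commonly quote separate prices for input and output tokens, per million tokens. The sketch below uses entirely made-up prices and quality scores for three hypothetical models. It picks the cheapest one that clears a quality bar:

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ModelOption:
    name: str
    input_price_per_m: float   # USD per 1M input tokens (hypothetical)
    output_price_per_m: float  # USD per 1M output tokens (hypothetical)
    quality: float             # score from your own evals, 0..1 (hypothetical)

def cost_per_request(m: ModelOption, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request at per-million-token prices."""
    return (input_tokens * m.input_price_per_m + output_tokens * m.output_price_per_m) / 1_000_000

def cheapest_good_enough(options: list[ModelOption], min_quality: float,
                         input_tokens: int, output_tokens: int) -> Optional[ModelOption]:
    """Cheapest option whose quality meets the bar, or None if none qualify."""
    eligible = [m for m in options if m.quality >= min_quality]
    if not eligible:
        return None
    return min(eligible, key=lambda m: cost_per_request(m, input_tokens, output_tokens))

options = [
    ModelOption("big-model", 3.00, 15.00, 0.92),
    ModelOption("mid-model", 0.50, 1.50, 0.85),
    ModelOption("small-model", 0.10, 0.40, 0.70),
]

# A typical request: 1,000 input tokens and 300 output tokens.
for m in options:
    print(m.name, round(cost_per_request(m, 1_000, 300), 6))

best = cheapest_good_enough(options, min_quality=0.80, input_tokens=1_000, output_tokens=300)
print("pick:", best.name if best else None)
```

With a 0.80 quality bar, the mid-sized model wins. It costs about an eighth of the big model per request in this made-up example.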

Best for: Cost optimization and model comparison.

4. LangChain

Now we’re getting serious.

LangChain helps you build structured AI applications. Not just single prompts. Entire workflows.

If you’re chaining prompts together, connecting tools, or adding memory, LangChain is your friend.
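The core idea of chaining is that one prompt's output becomes the next prompt's input. Below is a library-free sketch of that idea. It is not LangChain's API, and `fake_llm` is a hypothetical stand-in for a real model call:

```python
from string import Template
from typing import Callable

LLM = Callable[[str], str]

# Two reusable prompt templates, each with a named placeholder.
SUMMARIZE = Template("Summarize in one sentence:\n$text")
TRANSLATE = Template("Translate to French:\n$text")

def run_chain(llm: LLM, steps: list[Template], text: str) -> str:
    """Fill each template with the previous step's output and call the model."""
    for step in steps:
        text = llm(step.substitute(text=text))
    return text

def fake_llm(prompt: str) -> str:
    # Hypothetical model: tags its output with the instruction line so the chain is visible.
    instruction, _, body = prompt.partition("\n")
    return f"[{instruction}] {body}"

print(run_chain(fake_llm, [SUMMARIZE, TRANSLATE], "Quarterly sales rose 12%."))
```

LangChain builds on the same pattern of composing templates and model calls. It adds pieces such as memory and tool integration.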

Why developers love it:

- Prompt templates

- Memory systems

- Tool integration

- Modular architecture

Optimization here means improving the whole system. Not just one output.

You can test variations. Log results. Adjust logic. Improve performance step by step.

Best for: Building serious AI apps with structured flows.

5. Weights & Biases (W&B)

This one is for data lovers.

Weights & Biases lets you track experiments. Compare runs. Visualize metrics. It’s like a fitness tracker, but for your AI models.

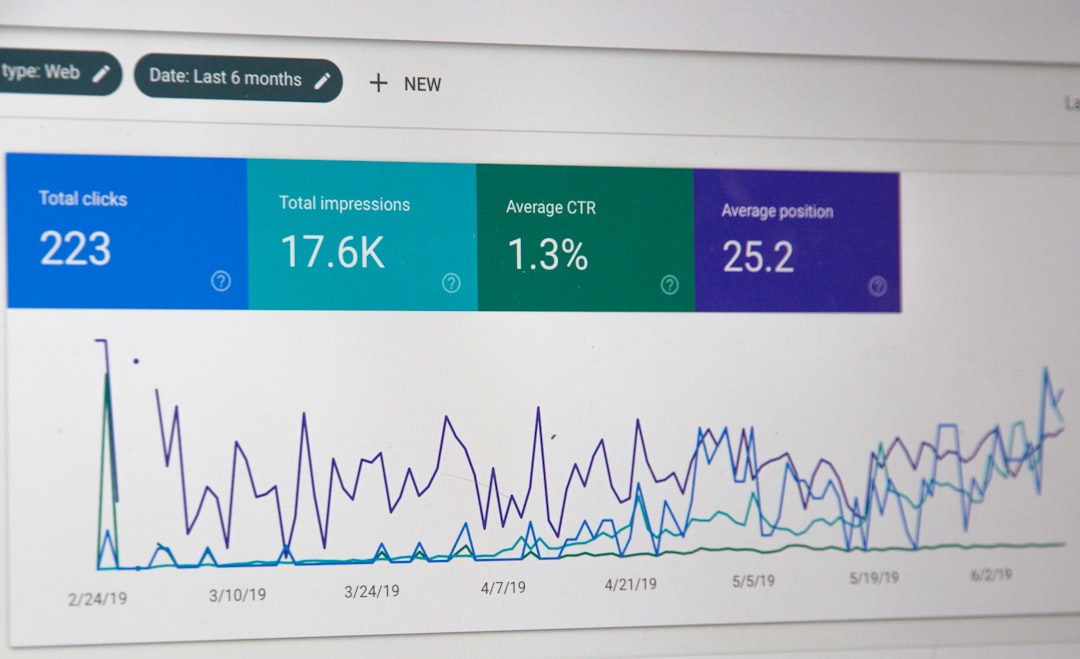

You can monitor:

- Accuracy

- Latency

- Token usage

- Cost per call

- User feedback scores

This is powerful for teams. You can see what works. What failed. And why.

Instead of guessing which prompt performs best, you have data.
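You can sketch what "having data" looks like without any tooling. Record one row of metrics per call for each prompt variant, then compare the aggregates. The runs and numbers below are hypothetical. In W&B, you would typically log each row with `wandb.log` during a run and compare variants in the dashboard rather than printing them:

```python
from statistics import mean

# Hypothetical per-call logs for two prompt variants.
runs = {
    "prompt-v1": [
        {"correct": 1, "latency_ms": 820, "tokens": 410, "cost_usd": 0.0021},
        {"correct": 0, "latency_ms": 790, "tokens": 395, "cost_usd": 0.0020},
        {"correct": 1, "latency_ms": 845, "tokens": 430, "cost_usd": 0.0022},
    ],
    "prompt-v2": [
        {"correct": 1, "latency_ms": 610, "tokens": 300, "cost_usd": 0.0015},
        {"correct": 1, "latency_ms": 640, "tokens": 310, "cost_usd": 0.0016},
        {"correct": 1, "latency_ms": 600, "tokens": 295, "cost_usd": 0.0015},
    ],
}

def summarize(calls):
    """Aggregate per-call metrics into one summary row."""
    return {
        "accuracy": mean(c["correct"] for c in calls),
        "avg_latency_ms": mean(c["latency_ms"] for c in calls),
        "avg_tokens": mean(c["tokens"] for c in calls),
        "avg_cost_usd": mean(c["cost_usd"] for c in calls),
    }

summaries = {name: summarize(calls) for name, calls in runs.items()}
for name, s in summaries.items():
    print(name, s)

# Prefer higher accuracy; break ties with lower average cost.
best = max(summaries, key=lambda n: (summaries[n]["accuracy"], -summaries[n]["avg_cost_usd"]))
print("best:", best)
```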

Best for: Teams optimizing production AI systems.

6. LlamaIndex

Working with custom data?

LlamaIndex helps you get better answers from AI over your own data. PDFs. Databases. Websites.

It improves retrieval. That means better context. And better answers.

Optimization features:

- Smart indexing

- Chunking strategies

- Context ranking

- Embedding comparisons

A small tweak to chunk size? It can make a big difference in answer quality.

That’s LLM optimization at work.
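To see why chunk size matters, here is a minimal fixed-size chunker with overlap. It measures size in words for simplicity. This is a plain-Python sketch, not LlamaIndex's own splitter, which has its own chunk-size and overlap settings. The document is a placeholder made of numbered words:

```python
def chunk_words(text: str, chunk_size: int, overlap: int) -> list[str]:
    """Split text into chunks of `chunk_size` words, each sharing `overlap` words with the previous chunk."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once a chunk reaches the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks

doc = " ".join(f"w{i}" for i in range(1, 21))  # 20 placeholder words

for size, overlap in [(5, 0), (8, 2), (20, 0)]:
    chunks = chunk_words(doc, size, overlap)
    print(size, overlap, len(chunks), chunks[0])
```

Smaller chunks give more precise matches but less surrounding context per hit. Larger chunks do the opposite. Overlap lowers the chance that an idea split across a chunk boundary is lost.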

Best for: Retrieval-augmented generation (RAG) systems.

7. Pinecone

If your AI app uses embeddings, you need a vector database.

Pinecone stores and retrieves them fast.

Why does that matter?

Because fast retrieval means quicker AI responses, and retrieving the right context means better ones. Performance matters.

Strengths:

- High-speed similarity search

- Scalable architecture

- Reliable infrastructure

It helps optimize AI systems behind the scenes.
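At its core, what a vector database speeds up is nearest-neighbor search over embeddings. The brute-force sketch below uses tiny made-up 3-dimensional vectors; real embeddings have hundreds or thousands of dimensions. Services like Pinecone rely on indexing so they do not have to compare the query against every stored vector the way this loop does:

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means the vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)

def top_k(query: list[float], store: dict[str, list[float]], k: int) -> list[tuple[str, float]]:
    """Score every stored vector against the query and return the k most similar."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in store.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Hypothetical embeddings for three documents.
store = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "return-window": [0.7, 0.3, 0.2],
}
query = [0.85, 0.15, 0.05]  # hypothetical embedding of "How do I get my money back?"

print(top_k(query, store, k=2))
```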

Best for: Large-scale semantic search systems.

8. Humanloop

This platform focuses on evaluation.

It lets humans review AI outputs. Score them. Improve prompts based on real feedback.

AI models are smart. But humans still know best.

Why it stands out:

- Human-in-the-loop review

- Prompt versioning

- A/B testing outputs

- Collaboration tools

This is practical optimization. You’re not just guessing. You’re validating with real people.
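Turning human reviews into a decision can start with tallying ratings per prompt version. The sketch below uses hypothetical 1-to-5 reviewer scores for two prompt versions, paired by test input. Platforms like Humanloop collect and organize this kind of feedback for you, but the comparison itself comes down to simple aggregates like these:

```python
from statistics import mean

# Hypothetical reviewer scores (1 = poor, 5 = excellent), paired by test input.
ratings = {
    "v1": [3, 4, 2, 4, 3, 3],
    "v2": [4, 5, 4, 3, 5, 4],
}

def win_rate(a: list[int], b: list[int]) -> float:
    """Share of paired inputs where version a scored higher than b, counting ties as half a win."""
    if len(a) != len(b):
        raise ValueError("ratings must be paired by input")
    score = sum(1.0 if x > y else 0.5 if x == y else 0.0 for x, y in zip(a, b))
    return score / len(a)

for version, scores in ratings.items():
    print(version, "mean rating:", round(mean(scores), 2))
print(f"v2 beats v1 on {win_rate(ratings['v2'], ratings['v1']):.0%} of inputs")
```

Six ratings per version are far too few to trust. In practice you would want many more reviews before switching prompts.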

Best for: Improving AI quality in customer-facing applications.

9. Galileo AI

Think of this as AI debugging.

Galileo helps detect hallucinations, bias, and drift. It monitors changes over time.

If something breaks, you see it.

If quality drops, you know why.

Key features:

- Hallucination detection

- Root cause analysis

- Performance monitoring

- Dataset analysis

This is advanced optimization. Especially for companies scaling AI.
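A bare-bones version of "if quality drops, you know" compares a rolling average against a baseline. The sketch below uses hypothetical daily quality scores. The window size and drop threshold are arbitrary choices, and this is not a description of how Galileo works internally:

```python
from collections import deque
from typing import Iterable, Optional

def first_quality_drop(scores: Iterable[float], baseline: float,
                       window: int = 5, max_drop: float = 0.10) -> Optional[int]:
    """Return the index at which the rolling mean of the last `window` scores
    first falls more than `max_drop` below `baseline`, or None if it never does."""
    recent = deque(maxlen=window)  # keeps only the most recent `window` scores
    for i, score in enumerate(scores):
        recent.append(score)
        if len(recent) == window and baseline - sum(recent) / window > max_drop:
            return i
    return None

# Hypothetical daily quality scores (e.g. share of answers judged correct).
daily = [0.91, 0.90, 0.92, 0.89, 0.90, 0.86, 0.80, 0.76, 0.74, 0.72]
print(first_quality_drop(daily, baseline=0.90))
```

A single average only tells you that something changed. Finding the root cause means breaking scores down further, for example by input type or data source.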

Best for: Enterprise AI systems needing stability and reliability.

How to Choose the Right Platform

Simple rule: match the tool to your goal.

- Testing prompts? → OpenAI Playground.

- Finding cheap models? → Try Hugging Face or OpenRouter.

- Building workflows? → LangChain.

- Tracking performance? → Weights & Biases.

- Improving RAG? → LlamaIndex or Pinecone.

- Human validation? → Humanloop.

- Debugging production AI? → Galileo.

You don’t need all of them. Start small. Add tools as you grow.

Why AI Discovery Matters

Here’s a secret.

The biggest model is not always the best model.

Sometimes a smaller fine-tuned model wins. It’s faster. Cheaper. More specific.

AI discovery platforms help you:

- Find niche models

- Compare benchmarks

- Test capabilities quickly

- Avoid overpaying

This keeps your AI stack lean and efficient.

The Future of LLM Optimization

Optimization is getting smarter.

We now see:

- Automatic prompt tuning

- Self-improving agents

- Cost-aware routing

- Real-time performance dashboards

More and more of this optimization will be automated.

But humans will still guide the direction.

Because tools are powerful. But strategy wins.

Final Thoughts

LLM optimization is not magic. It’s experimentation.

Test. Measure. Improve. Repeat.

The platforms listed above make it easier. They remove guesswork. They provide data. They help you build smarter systems.

And best of all?

You don’t need a PhD to use them.

Start with one tool. Explore. Break things. Learn fast.

The world of AI discovery is wide open. And with the right platform, you’ll move faster than ever.

Happy optimizing.